GSA SER Verified Lists vs. Scraping

Understanding the Core Difference

Automated link building revolves around efficiency and success rates, and when using GSA Search Engine Ranker, your choice of targets can make or break a campaign. The debate often centers on GSA SER verified lists vs scraping. Both methods aim to provide the software with URLs where it can attempt to post backlinks, but the philosophy, time investment, and results differ dramatically. A verified list is exactly what it sounds like: a pre-tested collection of URLs known to accept submissions from GSA SER. Scraping, on the other hand, involves harvesting fresh URLs from search engines using footprints, or from competitor backlink profiles, then feeding them into the software without any prior verification.

The Reliability Factor of Verified Lists

When you purchase or acquire a vetted database, you are buying a shortcut to a higher success rate out of the box. The reason many experienced users favor verified lists in the GSA SER verified lists vs scraping debate is simple: verified lists remove the guesswork. These URLs have already passed platform detection, meaning the software knows exactly which engine to load. You skip the step where GSA SER wastes time on pages that do not accept submissions at all. The result is immediate posting, less bandwidth consumption, and a lower CPU load, because the software is not analyzing dead ends.

The Maintenance Dilemma

A major drawback of relying solely on verified lists is decay over time. Link platforms die, domains expire, comment sections close, and security tokens change. A list that delivered a 90% verified rate last month might deliver a 30% rate today. Keeping a static list usable requires regular re-verification, a process that can take nearly as long as scraping fresh targets. If you do not have a system to continuously clean and update that verified database, you eventually end up spinning your wheels on dead URLs.
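
To put a number on that decay, here is a rough sketch that takes the illustrative 90% to 30% drop over one month and asks how fast the list is losing value. It assumes the verified rate decays exponentially, which is a simplifying assumption and not something GSA SER reports; the function names are made up for this example.

```python
import math

def decay_constant(rate_start, rate_end, elapsed_days):
    """Per-day decay constant k, assuming rate(t) = rate_start * exp(-k * t)."""
    return math.log(rate_start / rate_end) / elapsed_days

def days_until(rate_start, target_rate, k):
    """Days until the verified rate falls from rate_start to target_rate."""
    return math.log(rate_start / target_rate) / k

# Illustrative figures from the paragraph above: 90% -> 30% in about 30 days.
k = decay_constant(0.90, 0.30, 30)
half_life_days = math.log(2) / k          # time for the rate to halve
days_to_50_percent = days_until(0.90, 0.50, k)

print(f"half-life: {half_life_days:.1f} days")
print(f"days until the list drops to 50%: {days_to_50_percent:.1f}")
```

Under that assumption the list loses half of its working targets roughly every 19 days, which gives a rough idea of how often a re-verification pass would need to run.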

The Freshness Advantage of Scraping

Scraping dynamically collects targets based on current search engine results. This is the heartbeat of the GSA SER verified lists vs scraping conversation for those who prioritize volume and freshness. When you scrape, you find websites that are currently indexed and, in most cases, still active. You largely sidestep the problem of list decay because each URL is pulled shortly before the posting attempt. This method can also uncover untouched platforms that no other marketer has already flooded with spam, potentially offering higher success rates and a cleaner link profile.

Footprint Mastery and Relevance

Efficient scraping relies heavily on the quality of your search footprints. If you master platform-specific footprints, scraping allows you to target niche-relevant sites. Instead of a generic verified list that contains every niche under the sun, you can scrape for health blogs, tech forums, or local business directories. This contextual targeting is almost impossible to replicate with a pre-packaged list. When weighing GSA SER verified lists vs scraping, advanced users often prefer scraping because it gives them control over contextual relevance, which is becoming increasingly important for tier-1 link safety.
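
As a small illustration of niche targeting, the sketch below pairs platform footprints with niche keywords to produce search queries for a scraper. The footprints and keywords are placeholder examples rather than a tested set, and `build_queries` is a hypothetical helper, not part of GSA SER.

```python
from itertools import product

def build_queries(footprints, keywords):
    """Pair every footprint with every niche keyword, skipping duplicates."""
    queries = []
    seen = set()
    for footprint, keyword in product(footprints, keywords):
        query = f'{footprint} "{keyword}"'
        if query not in seen:
            seen.add(query)
            queries.append(query)
    return queries

# Placeholder inputs: swap in footprints for the platforms you actually target.
footprints = [
    '"powered by wordpress" "leave a reply"',
    'inurl:forum "register"',
]
keywords = ["home fitness", "keto recipes"]

queries = build_queries(footprints, keywords)
for q in queries:
    print(q)
```

Two footprints and two keywords yield four queries. The list grows multiplicatively, so a tight keyword set keeps both relevance and proxy usage under control.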

Time, Resources, and Infrastructure

Scraping is not free in terms of resources. It demands proxies, captcha-solving credits, and patience. The software must query search engines, parse results, and filter out duplicates before it can even attempt to post. You may burn through 10,000 captcha credits just to find 100 functioning targets. Verified lists, by contrast, plug directly into the posting engine. The efficiency gain is tangible: you can start a campaign and see links being built within seconds. For users with limited server resources or tight proxy budgets, the lower overhead of a verified list often makes it the better starting point in the GSA SER verified lists vs scraping decision.
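
The arithmetic behind that example is worth spelling out when budgeting. This sketch uses the illustrative figure of 10,000 credits for 100 working targets; the helper names and the 500-target goal are assumptions for the example only.

```python
def credits_per_target(credits_spent, working_targets):
    """Average captcha credits consumed per functioning target found."""
    if working_targets <= 0:
        raise ValueError("working_targets must be positive")
    return credits_spent / working_targets

def credits_needed(goal_targets, credits_spent, working_targets):
    """Credits required to reach goal_targets at the observed rate."""
    return goal_targets * credits_per_target(credits_spent, working_targets)

# Illustrative figures from the paragraph above.
cost = credits_per_target(10_000, 100)
budget = credits_needed(500, 10_000, 100)   # hypothetical goal of 500 targets

print(f"{cost:.0f} credits per working target")
print(f"{budget:.0f} credits to find 500 working targets")
```

At that rate each working target costs 100 credits, so a 500-target goal implies a budget of about 50,000 credits. Your own ratio will depend on footprints, platforms, and solver quality.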

Success Rate vs. Unique Domain Count

The metrics you care about should dictate your choice. If your KPI is strictly raw verified hits per minute, a good verified list far outperforms scraping. You might achieve an 80% submission success rate immediately. However, if your KPI is the absolute number of unique referring domains over a month, scraping eventually pulls ahead. A verified list has a finite ceiling; once you have posted to every URL that still works, you cannot generate new domains without scraping or buying a fresh list. Scraping feeds the software an effectively open-ended stream of potential new sites, so your domain count keeps growing as long as you keep the engine running and your footprints keep returning new results.
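
The ceiling effect can be seen in a toy model. The sketch below assumes a verified list with a fixed number of working domains posted at a constant daily rate, and a scraper that yields a smaller but steady number of new domains per day. All of the numbers are invented for illustration, and real campaigns will not be this linear.

```python
def verified_domains(day, list_size, per_day):
    """Unique domains from a finite verified list: capped at list_size."""
    return min(list_size, per_day * day)

def scraped_domains(day, per_day):
    """Unique domains from scraping, assuming a constant daily yield."""
    return per_day * day

def crossover_day(list_size, verified_per_day, scraped_per_day, horizon=365):
    """First day on which scraping has produced more unique domains."""
    for day in range(1, horizon + 1):
        if scraped_domains(day, scraped_per_day) > verified_domains(
            day, list_size, verified_per_day
        ):
            return day
    return None  # scraping never overtakes within the horizon

# Made-up example: 5,000 working list URLs at 1,000/day vs 200 scraped/day.
day = crossover_day(list_size=5_000, verified_per_day=1_000, scraped_per_day=200)
print(f"scraping pulls ahead on day {day}")
```

With these inputs the verified list plateaus at 5,000 domains after five days, and scraping overtakes it on day 26. Changing the daily yields moves the crossover, but a finite list always produces a plateau.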

Combining Both Methods for Maximum Output

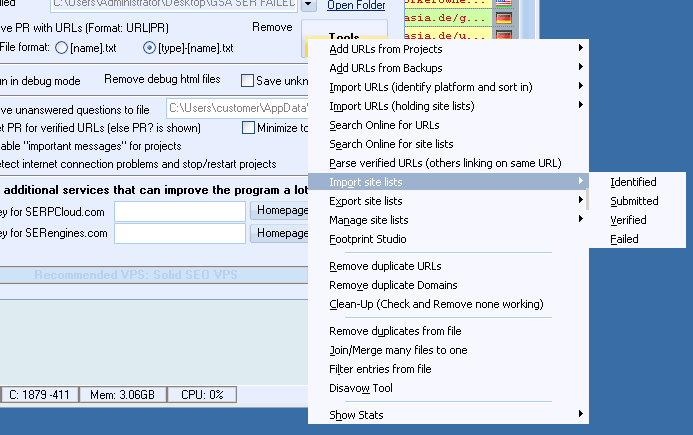

The most effective GSA SER setups rarely pick one side of the GSA SER verified lists vs scraping argument permanently. Instead, they hybridize. Use verified lists to keep the engine busy from the start, with posting speed high and resource usage low. Let scraped targets trickle in in the background. This hybrid approach ensures you always have a buffer of known-working sites while continuously exploring the web for new inventory. You get the instant gratification of verified links and the long-term growth of fresh discovery. By letting GSA SER post to verified URLs and scraped candidates at the same time, each method covers the other's weakness.
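
One way to prepare a hybrid target file outside the software is to merge both sources, keep verified URLs first, and drop scraped URLs whose domain is already covered. This is a minimal sketch of that idea; `merge_targets` and the sample URLs are hypothetical, and it does not describe how GSA SER handles duplicates internally.

```python
from urllib.parse import urlsplit

def host_of(url):
    """Lower-cased hostname without a leading 'www.', or '' if unparsable."""
    host = urlsplit(url).hostname or ""
    return host[4:] if host.startswith("www.") else host

def merge_targets(verified, scraped):
    """Verified URLs first, then scraped URLs from hosts not seen yet."""
    seen = set()
    merged = []
    for source, urls in (("verified", verified), ("scraped", scraped)):
        for url in urls:
            host = host_of(url)
            if not host or host in seen:
                continue  # skip malformed lines and duplicate hosts
            seen.add(host)
            merged.append((source, url))
    return merged

# Placeholder URLs for illustration only.
verified = ["https://www.example.com/blog/post-1", "http://forum.example.org/register"]
scraped = ["http://example.com/guestbook", "https://example.net/comment", "not a url"]

merged = merge_targets(verified, scraped)
for source, url in merged:
    print(source, url)
```

Deduplicating by host rather than by full URL keeps the focus on unique referring domains. If you want several pages per domain, compare full URLs instead.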

Making the Right Choice for Your Project

If you are running quick test campaigns, building massive tier-3 link wheels, or working with a slow internet connection, sticking to high-quality verified lists is the pragmatic way to go. If you are building a tier-1 contextual network or need to extract maximum domain diversity, scraping with tightly controlled footprints is non-negotiable. Ultimately, the choice between GSA SER verified lists and scraping is not a war but a strategic balance. Understanding the decay rate of static lists and the resource cost of dynamic discovery will allow you to tune your campaigns for both speed and sustained growth.
